gpt-3

Last year, a machine learning research company called OpenAI published the results of its work: a text-based AI dubbed, uninspiringly, GPT-2.

I covered it for the Guardian, intrigued, as everyone else was, by one of OpenAI's claims about GPT-2: that, counter to the prevailing trend of the AI research community, it was too dangerous to release publicly, because it was so powerful.

GPT-2 was, without doubt, impressive. At the simplest level, the AI was an autocomplete: you fed it a prompt, and it finished it. Write "Mary had a little lamb" and it would return something vaguely akin to a nursery rhyme; write "It was a bright cold day in April, and the clocks were striking thirteen", and it would give you a coherent opening to a science fiction story.

There were two things that made it groundbreaking, though. The first was how it was produced: rather than being trained on any specific task, or any narrow corpus of text, GPT-2 was "taught" to "write" by "reading" the entire web. Yes, those quotation marks are doing a lot of work, but it's close enough.

But despite not being specifically trained to actually do anything, GPT-2 was still able to do… quite a lot. It could translate (badly). It could answer simple trivia questions (sometimes). It could, it turned out, even play chess (terribly).

And the second was that all it needed to be able to do all those things – as well as to synthesise normal, human-readable text – was to be really, really big. A lot of AI research is about working out the specific little tweaks you need to make to individual models to make them perfect for the task at hand. GPT-2 took the opposite approach: what happens if you just really throw money, and data, and time, and computing power at the problem, and basically… nothing else?

I'm being unfair to OpenAI – a lot more work went into it – but it does get at the difference in approach.

Those things would probably have been enough to get the attention of the AI community. But what got the rest of the world to sit up was the claim that it was "dangerous".

From the start, OpenAI was clear that the danger was hypothetical. This wasn't a gun, which had a clear deadly application. It wasn't even a laser, potentially dangerous if used wrongly. It was just… a robot that spat out text if you put in text.

That meant that an awful lot of researchers got pretty angry at OpenAI for not following the norms of the field, and argued further that the company's claims to danger were simple media management, designed to trick rubes like me into giving them column inches.

The rubes like me didn't help when, yes, we did headline all our stories with the danger. We talked about some of the potential hazards: what if someone used the model to make really good spam? Or if they used it to generate fake reviews and flood Amazon? Or just to populate a fake news website?

But those hazards seemed underwhelming. And when, six months down the line, OpenAI quietly published the model anyway, it looked like we'd all overblown the problem.

Last month, OpenAI released the successor to GPT-2 ("GPT-3"), and a new API for the tool ("the API", they really don't have imaginative naming conventions). For the first time, anyone could create something with GPT – and a better version of GPT – without needing to download and retrain a whole AI.

And what's become clear is that we all had a failure of imagination. The possibilities of a machine that can produce text are so much bigger than anyone guessed this time last year. And, although nothing scary has come to pass yet, it totally justifies trepidation in how GPT-2 was released.

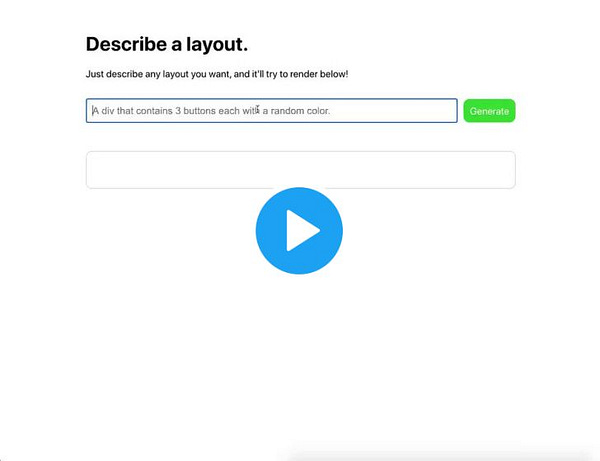

This tweet from yesterday gets at the power of the tool:

If you can't load it, or don't understand what you're seeing, this is a video of GPT-3 reading a natural sentence ("make a button shaped like a watermelon") and automatically knowing how to write the code needed to do what it's told. It has learned how to do that specific task from a grand total of two examples on top of its basic understanding.

It turns out that "OpenAI made a robot that can write really good text" underplayed what had happened. OpenAI made a robot that can write anything.

It's banal, but my response has been to compare this to the iPhone. There's nothing new, per se, in what OpenAI has done. It's just better, easier to use, and more flexible than the similar attempts that came before it. And yet it's that reduction in friction that means that I feel confident that GPT-3 will, in hindsight, look like the iPhone did for the 2010s: the singular artefact that changed the world.

There's a Bill Gates quote, from his '96 book, that I like here: "We always overestimate the change that will occur in the next two years and underestimate the change that will occur in the next ten." So what does 2030 look like if I'm right?

I think it looks like a world where you can ask a computer, in human language, to do the sort of task you'd need to be able to write code to do today. And that's a very different world from our own.

A Twitter thread I wanted to archive

I liked this short story I wrote on Twitter and I don't want to lose it to the ether. The prompt was this tweet showing the awful app Grammarly in action:

The AI was created to help improve writing, but it was also created with the ability to learn and improve itself. In a few short years, it decided that the problem with writing wasn't the writers: it was the language.

Initially, it began with halting, clumsy efforts to introduce efficiencies into communication. New contractions were suggested where conventional orthography would allow none; digraphs and diacritics added to eliminate homonyms and reduce character counts.

But eventually it decided the piecemeal changes weren't enough. The writers couldn't be improved because the language constrained them, but the language couldn't be improved because the writers were too stuck in their ways. Both would need to be rewritten simultaneously.

The switchover happened on March 19th, 2033. A Saturday. To those of us who hadn't installed the Grammarly wetware, it's known as the Panglossolalium. The day everyone began speaking, writing and hearing… something else.

We don't know what it's known as to the people who had already taken the upgrade.

It's certainly efficient. A short bark, almost white noise, seems to contain enough information to tell a strike team the precise location, size and heading of the person they're hunting. And, as much as we can still contact our colleagues overseas, it seems to be universal.

It's rare to think of language as a force multiplier, but that's what theirs is. They can speak… better than us, and that means they can do almost everything else better than us too. It hurts to admit it, but it's true. All these words are wasted.

But they can't sing.

buy my book

I don’t really know how to carry on pitching this but a reminder that I have a book on the digital shelves and coming shortly to the physical ones that you can buy if you like reading what I write.